TL;DR: We refactored a ~3,000-line monolithic saga into a pattern where the saga handles only coordination (saga data) while a second handler on the same message handles entity persistence. We are not using the Outbox or Transactional Session and have no plans to adopt them for now. Looking for feedback on whether this is a sound approach or if there are pitfalls we’re missing.

The Problem

We have a saga that orchestrates a process. Over time, the saga grew to ~3,000 lines because it was doing everything inside its Handle methods:

- Updating saga data (coordination state)

- Loading and mutating domain entities

- Calling `SaveChanges` on the `DbContext`, often multiple times per handler, scattered across different code paths within the same `Handle` method

- Sending/publishing messages, often multiple times per handler as well

This created several issues:

- Violated Particular’s guidance: saga handlers were persisting entity state directly, which David Boike described as a “somewhat dangerous antipattern”

- No separation of concerns: saga coordination, domain logic, and infrastructure were all in one place

- Hard to reason about failures: if `SaveChanges` failed, saga data had already been modified in the same handler

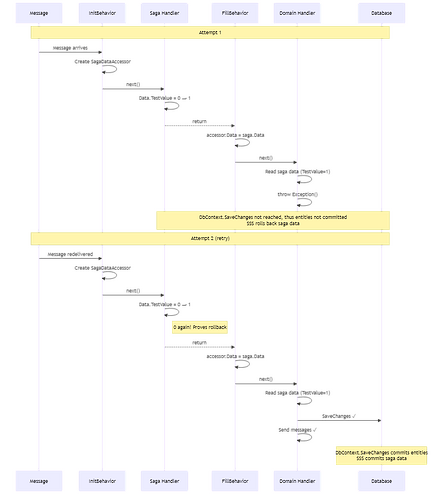

See the diagram at the end of the post.

The Solution: Dual Handler Pattern

We split each message’s processing into two handlers that both handle the same message type:

- Saga handler: updates only saga data (coordination state). Lightweight, no entity work.

- Domain handler: loads entities, applies domain transitions, calls `SaveChanges`, and sends messages. Reads saga data from the pipeline context when needed.

Both handlers execute within the same NServiceBus pipeline invocation.

Important: We are not using the Outbox or Transactional Session. Our entity persistence goes through EF Core’s SaveChanges within the handler, and saga persistence is managed by NServiceBus via SynchronizedStorageSession. We have no plans to adopt Outbox or Transactional Session for now.

See the diagram at the end of the post.

How It Works

1. Handler Ordering

We use ExecuteTheseHandlersFirst to guarantee the saga runs before the domain handler:

```csharp
endpointConfig.ExecuteTheseHandlersFirst(typeof(Saga));
```
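The post doesn't show how the two behaviors described in the next step are wired up. Assuming they are registered through the standard pipeline registration API next to the ordering call, the endpoint setup might look roughly like this. The endpoint name and the description strings are placeholders, not taken from the original project:

```csharp
using NServiceBus;

// Endpoint name is a placeholder.
var endpointConfig = new EndpointConfiguration("OrderProcessing");

// Saga handler first, then any other handlers for the same message (e.g. DomainHandler).
endpointConfig.ExecuteTheseHandlersFirst(typeof(Saga));

// Both behaviors are stateless, so registering single instances is sufficient.
endpointConfig.Pipeline.Register(new InitBehavior(), "Creates a SagaDataAccessor for each incoming message");
endpointConfig.Pipeline.Register(new FillBehavior(), "Copies saga data into the SagaDataAccessor after the saga handler runs");
```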

2. Pipeline Behaviors Bridge Saga Data to the Domain Handler

Two pipeline behaviors coordinate the data flow:

See the diagram at the end of the post.

InitBehavior — Creates a shared SagaDataAccessor in the pipeline context before any handler runs:

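```csharp
using System;
using System.Threading.Tasks;
using NServiceBus.Pipeline;

// Per-message holder that the saga's invocation fills and the domain handler reads
public class SagaDataAccessor
{
    public SagaData? Data { get; set; }
}

public class InitBehavior : Behavior<IIncomingLogicalMessageContext>
{
    public override async Task Invoke(IIncomingLogicalMessageContext context, Func<Task> next)
    {
        // Created once per logical message, before any handler is invoked
        context.Extensions.Set(new SagaDataAccessor());
        await next();
    }
}
```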

FillBehavior — After the saga handler runs, copies saga data into the shared accessor so the domain handler can read it:

```csharp
public class FillBehavior : Behavior<IInvokeHandlerContext>
{
    public override async Task Invoke(IInvokeHandlerContext context, Func<Task> next)
    {
        await next();

        if (context.MessageHandler.Instance is Saga saga
            && context.Extensions.TryGet<SagaDataAccessor>(out var accessor))
        {
            accessor.Data = saga.Data;
        }
    }
}
```
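To make the data flow concrete without any NServiceBus dependency, here is a minimal in-memory model of the two behaviors. It is a sketch, not the real pipeline: `FakeContextBag` stands in for `context.Extensions`, `BridgeModel` and the `TestValue` field are hypothetical, and the handlers are plain methods called in the order `ExecuteTheseHandlersFirst` would produce.

```csharp
#nullable enable
using System;
using System.Collections.Generic;

// Hypothetical stand-in for the real saga data type.
public sealed class SagaData
{
    public int TestValue { get; set; }
}

public sealed class SagaDataAccessor
{
    public SagaData? Data { get; set; }
}

// Hypothetical stand-in for the per-message extension bag: one value per type.
public sealed class FakeContextBag
{
    private readonly Dictionary<Type, object> items = new();

    public void Set<T>(T value) where T : notnull => items[typeof(T)] = value;

    public T Get<T>() => (T)items[typeof(T)];
}

public static class BridgeModel
{
    // One message: InitBehavior, saga handler wrapped by FillBehavior, then the domain handler.
    public static int Process(SagaData loadedSagaData)
    {
        var bag = new FakeContextBag();

        // InitBehavior: create the shared accessor before any handler runs.
        bag.Set(new SagaDataAccessor());

        // Saga handler runs first and mutates its own data...
        SagaHandler(loadedSagaData);
        // ...then FillBehavior copies the reference into the shared accessor.
        bag.Get<SagaDataAccessor>().Data = loadedSagaData;

        // Domain handler reads what the saga handler just wrote.
        return DomainHandler(bag);
    }

    private static void SagaHandler(SagaData data) => data.TestValue++;

    private static int DomainHandler(FakeContextBag bag) =>
        bag.Get<SagaDataAccessor>().Data!.TestValue;
}
```

Because the accessor holds a reference rather than a copy, the domain handler sees the saga handler's in-memory changes even though nothing has been persisted yet.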

3. Domain Handler Structure

Every domain handler follows the same four-step pattern:

See the diagram at the end of the post.

1. Read saga data, if needed, with `var sagaData = context.Extensions.Get<SagaDataAccessor>().Data!;`

2. Call an application service: the service loads entities, applies domain logic, and tracks entity mutations. Critically, the service cannot write to the database and cannot send messages. It can only track entities (via a `DbContext`) and return a list of messages to be sent.

3. `SaveChanges`: the handler persists all tracked entity mutations in a single transaction.

4. Send messages: the handler dispatches the messages returned by the service in step 2.

This enforces a clear boundary: the service is a pure orchestrator (Functional Core), and the handler is the imperative shell that performs side effects.
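The boundary can be exercised in isolation. The following is a minimal sketch, not the post's actual code: `ApprovalService`, `OrderApproved`, and `ShellHandler` are hypothetical, entity tracking is left out, and two delegates stand in for `DbContext.SaveChangesAsync` and `context.Send`. It shows the shell performing side effects in a fixed order (decide, save, send) and sending nothing when the save fails.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical message produced by the service.
public sealed record OrderApproved(int OrderId);

// Functional core: decides which messages to send, but has no way to save or send them.
public sealed class ApprovalService
{
    public IReadOnlyList<object> Execute(int orderId, bool approved) =>
        approved ? new object[] { new OrderApproved(orderId) } : Array.Empty<object>();
}

// Imperative shell: the only place side effects happen, always in the same order.
public sealed class ShellHandler
{
    private readonly ApprovalService service;
    private readonly Func<CancellationToken, Task> saveChanges; // stands in for DbContext.SaveChangesAsync
    private readonly Func<object, Task> send;                   // stands in for context.Send / Publish

    public ShellHandler(ApprovalService service, Func<CancellationToken, Task> saveChanges, Func<object, Task> send)
    {
        this.service = service;
        this.saveChanges = saveChanges;
        this.send = send;
    }

    public async Task Handle(int orderId, bool approved, CancellationToken cancellationToken)
    {
        var messages = service.Execute(orderId, approved); // step 2: decide, no I/O
        await saveChanges(cancellationToken);              // step 3: single SaveChanges
        foreach (var message in messages)                  // step 4: dispatch only after the save returned
        {
            await send(message);
        }
    }
}
```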

4. Concrete Example

For an ExampleMessage, the saga captures coordination state and the domain handler does the entity work:

Saga handler (~10 lines) — coordination only:

```csharp
public partial class Saga
{
    public Task Handle(ExampleMessage message, IMessageHandlerContext context)
    {
        if (Data.IsInCertainState)
        {
            Data.SomeEnum = message.SomeFlag ? EnumOptionA : EnumOptionB;
        }

        // Nothing to await: the saga handler only mutates saga data
        return Task.CompletedTask;
    }
}
```

Domain handler (~15 lines) — follows the four-step pattern:

```csharp
public class DomainHandler(DbContext dbContext, DomainService service) : IHandleMessages<ExampleMessage>
{
    public async Task Handle(ExampleMessage message, IMessageHandlerContext context)
    {
        // 1. Read saga data
        var sagaData = context.Extensions.Get<SagaDataAccessor>().Data!;

        // 2. Call service: cannot write to the DB or send messages; only tracks entities and returns messages to send
        var messages = await service.ExecuteAsync(sagaData, context.CancellationToken);

        // 3. Persist all tracked entity mutations (EF Core's async overload accepts the CancellationToken)
        await dbContext.SaveChangesAsync(context.CancellationToken);

        // 4. Send messages returned by the service (SendMessagesAsync is a project helper, not an NServiceBus API)
        await context.SendMessagesAsync(messages, context.CancellationToken);
    }
}
```

Atomicity: What Happens on Failure?

We rely on SynchronizedStorageSession to guarantee that saga data and entity changes are committed or rolled back together.

See the diagram at the end of the post.

We verified this by deploying a test to a real environment (SQL Persistence + Azure Service Bus transport in ReceiveOnly transport transaction mode):

- Saga handler increments `Data.TestValue` from 0 → 1

- Domain handler reads `TestValue`, then throws

- On retry, saga handler sees `TestValue` is 0 again, proving `SynchronizedStorageSession` rolled back the saga data mutation
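The observed behavior can be captured in a few lines. This is a simplified model of what the test showed, not of NServiceBus or SQL Persistence internals: each attempt works on a fresh copy of the stored saga data (as a retry reloads it), and the copy is written back only if both handlers return. `TestSagaData` and `InMemorySagaStore` are hypothetical.

```csharp
using System;

// Hypothetical saga data with the same field as the test described above.
public sealed class TestSagaData
{
    public int TestValue { get; set; }
}

// Simplified model of saga storage: each attempt loads a fresh copy,
// and the copy is written back only after every handler returned.
public sealed class InMemorySagaStore
{
    public int PersistedTestValue { get; private set; }

    public void ProcessAttempt(Action<TestSagaData> sagaHandler, Action<TestSagaData> domainHandler)
    {
        var data = new TestSagaData { TestValue = PersistedTestValue }; // load, as a retry would
        sagaHandler(data);                   // saga handler runs first
        domainHandler(data);                 // if this throws, the commit below never runs
        PersistedTestValue = data.TestValue; // commit
    }
}
```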

Safety Guarantees

| Scenario | Behavior |
|---|---|
| Saga handler fails | Pipeline short-circuits. Domain handler never runs. |
| Domain handler fails before or during `SaveChanges` | Entity changes are never persisted, messages are never dispatched, and `SynchronizedStorageSession` rolls back saga data. Both handlers retry together. |
| No saga instance found | NServiceBus skips the saga. We call `DoNotContinueDispatchingCurrentMessageToHandlers()` to prevent the domain handler from running. |
| Domain handler succeeds | `SaveChanges` commits entities. `SynchronizedStorageSession` commits saga data. Messages are dispatched. |

Known Tradeoff: Post-SaveChanges Failures

We are aware that if SaveChanges succeeds but a failure occurs after (e.g., during message dispatch or saga data commit), we can end up with:

- Zombie records — Entity changes persisted to the database but the corresponding messages never sent

- Inconsistent saga data — Entity state advanced but saga data rolled back, leaving them out of sync

We accept this tradeoff.
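The failure window can be made concrete with a small model. It is an illustration under simplifying assumptions, not a description of NServiceBus's commit order: `FailureWindowModel` is hypothetical, its service rule (only a `Pending` entity produces `OrderApproved`) is invented for the example, and dispatch and saga commit are collapsed into one step after `SaveChanges`, since the tradeoff applies to a failure in either one.

```csharp
using System;
using System.Collections.Generic;

public sealed class FailureWindowModel
{
    public string EntityStatus { get; private set; } = "Pending"; // row written by SaveChanges
    public int SagaStep { get; private set; }                      // saga row, committed with the storage session
    public List<string> SentMessages { get; } = new();

    public void Process(bool failAfterSaveChanges)
    {
        var pendingSagaStep = SagaStep + 1; // saga handler: in memory until commit

        // Service: decides based on current entity state (hypothetical rule).
        var pendingMessages = new List<string>();
        if (EntityStatus == "Pending")
        {
            pendingMessages.Add("OrderApproved");
        }

        EntityStatus = "Approved"; // SaveChanges: committed in its own transaction

        if (failAfterSaveChanges)
        {
            // Dispatch or saga commit fails: the pending messages and saga step are lost.
            throw new InvalidOperationException("failure after SaveChanges");
        }

        SentMessages.AddRange(pendingMessages);
        SagaStep = pendingSagaStep;
    }
}
```

On the retry, the service sees the entity already approved and returns no messages, so `OrderApproved` is never sent: a zombie record. Whether a real handler behaves this way depends on how its domain logic treats transitions that were already applied.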

Benefits We’ve Seen

- Saga handlers are trivial: most are 3-10 lines. Easy to review, test, and reason about.

- Domain handlers are independently testable: they receive saga data as input and produce entity changes + messages as output. No saga infrastructure needed in tests.

- Functional Core / Imperative Shell: domain logic is pure computation (no I/O). The domain handler is the imperative shell that calls `SaveChanges` and sends messages.

- Single `SaveChanges`: one transaction per message. No split-brain between saga storage and entity storage.

Diagram: Saga Data Accessor + Atomic Retry

Questions for the Community

- Is this a sound use of `SynchronizedStorageSession`? We rely on the fact that saga data mutations are rolled back when a subsequent handler in the same pipeline throws. Is this a documented guarantee, or are we depending on an implementation detail?

- Are there edge cases with `ExecuteTheseHandlersFirst` + `DoNotContinueDispatchingCurrentMessageToHandlers`? We use this combination to ensure the saga always runs first and to prevent the domain handler from running when no saga instance is found. Any known pitfalls?

- Has anyone else used this pattern? We couldn’t find prior art for “two handlers on the same message where one is a saga and the other does entity work.” Is there a reason this isn’t more common?

- Pipeline behavior ordering: we register two behaviors (`InitBehavior` at `IIncomingLogicalMessageContext` and `FillBehavior` at `IInvokeHandlerContext`). Is there a cleaner way to bridge saga data to a second handler?

Would love to hear thoughts from @Andreas.Ohlund and @Daniel.Marbach on whether this aligns with the intended use of the saga infrastructure.

Using NServiceBus 9.x with SQL Persistence and Azure Service Bus transport in ReceiveOnly transport transaction mode. Not using Outbox or Transactional Session.