Hi,

My question is: which version of Particular.ServiceControl is recommended to go with Particular.ServicePulse?

We were on old versions: Particular.ServiceControl 1.40.0 and Particular.ServicePulse 1.9.1. The documentation recommends upgrading to Particular.ServiceControl 1.45 or later, so I upgraded to Particular.ServiceControl-1.48.0 and Particular.ServicePulse-1.13.4.

I then backed up the database and took a snapshot of the machine.

After that, I upgraded to Particular.ServiceControl-2.1.4 and Particular.ServicePulse-1.14.4.

However, I need clarification on a few points.

The servicecontrol.exe.config is shown below:

<?xml version="1.0" encoding="utf-8"?>
<configuration>
  <appSettings>
    <add key="Raven/Esent/MaxVerPages" value="2048" />
    <add key="ServiceControl/DBPath" value="D:\ServiceControl\DB" />
    <add key="ServiceControl/TransportType" value="NServiceBus.MsmqTransport, NServiceBus.Core" />
    <add key="ServiceControl/HostName" value="localhost" />
    <add key="ServiceControl/Port" value="33333" />
    <add key="ServiceControl/DatabaseMaintenancePort" value="33334" />
    <add key="ServiceControl/LogPath" value="D:\ServiceControl\Logs" />
    <add key="ServiceControl/ForwardAuditMessages" value="False" />
    <add key="ServiceControl/ForwardErrorMessages" value="False" />
    <add key="ServiceControl/AuditRetentionPeriod" value="10.00:00:00" />
    <add key="ServiceControl/ErrorRetentionPeriod" value="10.00:00:00" />
    <add key="ServiceBus/AuditQueue" value="audit" />
    <add key="ServiceBus/ErrorQueue" value="error" />
  </appSettings>
  <runtime>
    <gcServer enabled="true" />
  </runtime>
</configuration>
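For anyone wanting to double-check what these settings resolve to, here is a minimal Python sketch (not something ServiceControl ships) that reads the `appSettings` keys and interprets the retention periods. It assumes the values use the `d.hh:mm:ss` layout, so `10.00:00:00` would mean 10 days. The helper names `read_app_settings` and `parse_timespan` are my own, and the embedded sample is a shortened copy of the config above.

```python
import re
import xml.etree.ElementTree as ET
from datetime import timedelta

# Shortened copy of the config above. Note the straight quotes:
# curly quotes introduced by copy-paste will not parse as XML.
SAMPLE_CONFIG = """<?xml version="1.0" encoding="utf-8"?>
<configuration>
  <appSettings>
    <add key="ServiceControl/Port" value="33333" />
    <add key="ServiceControl/AuditRetentionPeriod" value="10.00:00:00" />
    <add key="ServiceControl/ErrorRetentionPeriod" value="10.00:00:00" />
  </appSettings>
</configuration>"""

# Assumed layout: optional "days." prefix followed by hh:mm:ss.
TIMESPAN = re.compile(r"^(?:(\d+)\.)?(\d{1,2}):(\d{2}):(\d{2})$")


def read_app_settings(xml_text):
    """Return the <appSettings> entries as a plain key -> value dict."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    return {add.get("key"): add.get("value")
            for add in root.findall("./appSettings/add")}


def parse_timespan(value):
    """Convert a 'd.hh:mm:ss' string into a timedelta."""
    match = TIMESPAN.match(value)
    if match is None:
        raise ValueError(f"unrecognised time span: {value!r}")
    days, hours, minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return timedelta(days=days, hours=hours, minutes=minutes, seconds=seconds)


if __name__ == "__main__":
    settings = read_app_settings(SAMPLE_CONFIG)
    for key in ("ServiceControl/AuditRetentionPeriod",
                "ServiceControl/ErrorRetentionPeriod"):
        print(key, "->", parse_timespan(settings[key]))
```

Pointing `read_app_settings` at the real file contents instead of the sample should give a quick view of the retention windows in effect.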

I get the following warnings in the logs:

- In the ravenlogfile, this appears:

2018-09-20 09:30:29.3146|91|Warn|Raven.Client.Connection.Async.AsyncServerClient|Was unable to fetch topology from primary node http:// localhost also there is no cached topology

- In the logfile, this appears:

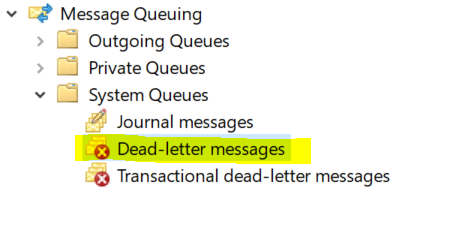

2018-09-20 09:13:47.0995|111|Warn|ServiceControl.MSMQ.DLQMonitor.CheckDeadLetterQueue|36 messages in the Dead Letter Queue on MACHINE. Please submit a support ticket to Particular using support@particular.net if you would like help from our engineers to ensure no message loss while resolving these dead letter messages.
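To keep an eye on these warnings over time, a small script can split the pipe-delimited log lines and pull out the dead letter count. This is only a sketch based on the two lines quoted above: the field layout (timestamp, a numeric field I am assuming is a thread ID, level, logger, message) is inferred from those samples, and `parse_log_line` and `dead_letter_count` are hypothetical helper names.

```python
import re
from datetime import datetime
from typing import NamedTuple


class LogEntry(NamedTuple):
    timestamp: datetime
    thread: int      # assumption: the second field is a thread ID
    level: str
    logger: str
    message: str


# Wording taken from the DLQ warning quoted above.
DLQ_PATTERN = re.compile(r"(\d+) messages in the Dead Letter Queue")


def parse_log_line(line):
    """Split 'timestamp|n|level|logger|message'; return None if it does not fit."""
    parts = line.rstrip("\n").split("|", 4)
    if len(parts) != 5:
        return None
    stamp, thread, level, logger, message = parts
    try:
        when = datetime.strptime(stamp, "%Y-%m-%d %H:%M:%S.%f")
        return LogEntry(when, int(thread), level, logger, message)
    except ValueError:
        return None


def dead_letter_count(entry):
    """Return the DLQ message count mentioned in the entry, or None."""
    match = DLQ_PATTERN.search(entry.message)
    return int(match.group(1)) if match else None


if __name__ == "__main__":
    sample = ("2018-09-20 09:13:47.0995|111|Warn|"
              "ServiceControl.MSMQ.DLQMonitor.CheckDeadLetterQueue|"
              "36 messages in the Dead Letter Queue on MACHINE.")
    entry = parse_log_line(sample)
    if entry and entry.level == "Warn":
        print(entry.timestamp, entry.logger, dead_letter_count(entry))
```

Running this over the whole log file, line by line, would show whether the dead letter count is growing or stable.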

What would be the recommended steps to resolve the above issues?

I have also encountered situations where messages are not processed and the system appears to hang. In those cases MSMQ is stuck on starting, so I have had to kill the process and then:

- Delete the MQInSeqs.lg1, MQInSeqs.lg2, MQTrans.lg1, MQTrans.lg2, and QMLog files in C:\Windows\System32\msmq\storage

- Under HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\MSMQ\Parameters, set the value of LogDataCreated to zero

- Reboot

Then I perform a database compaction and cleanup, following the "Compacting RavenDB 3.5" article in the ServiceControl section of the Particular Docs, in order to resolve the issue.
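Because deleting the MSMQ log files is destructive, the file part of the cleanup described above can be rehearsed as a dry run first. The sketch below only lists which of the named files exist in the storage folder; it deletes nothing and does not touch the registry. The function name `plan_msmq_log_cleanup` is hypothetical, and the file names and path are the ones from this post.

```python
from pathlib import Path

# File names taken from the cleanup steps in this post.
MSMQ_LOG_FILES = ("MQInSeqs.lg1", "MQInSeqs.lg2",
                  "MQTrans.lg1", "MQTrans.lg2", "QMLog")


def plan_msmq_log_cleanup(storage_dir):
    """Dry run: return the files the cleanup would delete. Deletes nothing."""
    wanted = {name.lower() for name in MSMQ_LOG_FILES}
    matches = (p for p in Path(storage_dir).iterdir()
               if p.is_file() and p.name.lower() in wanted)
    return sorted(matches, key=lambda p: p.name.lower())


if __name__ == "__main__":
    storage = Path(r"C:\Windows\System32\msmq\storage")
    if storage.is_dir():
        for path in plan_msmq_log_cleanup(storage):
            print(f"would delete: {path}")
    else:
        print(f"{storage} not found; nothing to list")
    # The LogDataCreated registry value and the reboot are left as manual steps.
```

Comparing the printed list against the five expected names before deleting anything gives a quick sanity check that the right folder is being cleaned.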

Any suggestions or tips would be greatly appreciated.

Thanks,

Tom.