With the topology changes in NServiceBus.Transport.AzureServiceBus version 5, it seems that a couple of features documented in the main NServiceBus section are no longer fully supported: specifically, polymorphic routing (https://docs.particular.net/nservicebus/messaging/dynamic-dispatch-and-routing), with or without interfaces as messages (https://docs.particular.net/nservicebus/messaging/messages-as-interfaces).

In the previous topology, these features worked out of the box, no additional configuration needed, simply by creating rules filtering on the NServiceBus.EnclosedMessageTypes header. In contrast, the new topology will not work with these features out of the box, requiring manual configuration on the endpoint and/or on the Azure Service Bus itself. Any misconfiguration might cause message loss.

What’s more, if I’m not mistaken, it’s not possible to replicate the previous behaviour without some serious modification of the documented topologies. To elaborate, I’ll paraphrase a few sections of the Topology documentation and explain why I think they are unsatisfactory. If my reasoning contains any mistakes, please let me know.

Interface based inheritance

The section Interface based inheritance in the Topology documentation (https://docs.particular.net/transports/azure-service-bus/topology#subscription-rule-matching-interface-based-inheritance) contains the first notes on implementing these features.

Documentation says

There are a couple of suggestions on how to implement the topology for the following messages:

namespace Shipping;

interface IOrderAccepted : IEvent { }

interface IOrderStatusChanged : IEvent { }

class OrderAccepted : IOrderAccepted, IOrderStatusChanged { }

class OrderDeclined : IOrderAccepted, IOrderStatusChanged { }

An endpoint SubscriberA subscribing to IHandleMessages<IOrderStatusChanged> could configure like so:

topology.SubscribeTo<IOrderStatusChanged>("Shipping.OrderAccepted");

topology.SubscribeTo<IOrderStatusChanged>("Shipping.OrderDeclined");

My Interpretation

This would require the endpoint SubscriberA to know about (and stay up to date with!) every implementer of IOrderStatusChanged. In a large decentralised system, this might be hard to maintain. In our design, these interfaces cross domain boundaries and are consumed by entirely different departments. Furthermore, each consuming endpoint would need to maintain this list on its own. Missing a type in this list would mean losing that message.

Documentation says

So instead, we can turn it around, and tell the publishing endpoint to do something different:

topology.PublishTo<OrderAccepted>("Shipping.IOrderStatusChanged");

topology.PublishTo<OrderDeclined>("Shipping.IOrderStatusChanged");

Now SubscriberA can simply use the default behaviour to subscribe to IOrderStatusChanged and everything works.

My Interpretation

With this, what would consumption for IHandleMessages<IOrderAccepted> look like? By default, this would consume the topic IOrderAccepted. But nothing is publishing to that topic.

The documentation is not very explicit about it, but my conclusion is that this suggestion would only work if the handlers for IHandleMessages<IOrderAccepted> and IHandleMessages<IOrderStatusChanged> live in the same endpoint. And this would only work as long as all messages implement both of these interfaces. A message type implementing just IOrderAccepted would need different special treatment.

This seems like a weird requirement to us. As we understood it, the idea behind interface based inheritance was that you can apply specific business concerns to the same message in a mix-in way. Logically, each of these interfaces would be a different concern. Think “main order fulfillment”, “newsletter signup”, “account creation”, and so forth. These different concerns would live in separate endpoints.
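The gap described above can be reproduced with a toy model. The sketch below is not the transport's code: TopicPerEventModel and its members are hypothetical names, and the model assumes only what the quoted documentation implies, namely that each published event lands on exactly one topic and that a subscriber with default settings listens on the topic named after the type it handles.

```csharp
using System;
using System.Collections.Generic;

// Toy model of a topic-per-event topology. This is NOT the transport's code;
// it only mimics "publish to one topic, subscribe to one topic per handled type".
public static class TopicPerEventModel
{
    // Publisher-side overrides, mirroring the two PublishTo calls quoted above.
    static readonly Dictionary<string, string> PublishTo = new()
    {
        ["Shipping.OrderAccepted"] = "Shipping.IOrderStatusChanged",
        ["Shipping.OrderDeclined"] = "Shipping.IOrderStatusChanged",
    };

    // Topic an event lands on: the override if present, else its own type name.
    public static string TopicFor(string concreteType) =>
        PublishTo.TryGetValue(concreteType, out var topic) ? topic : concreteType;

    // A subscriber with default settings listens on the topic named after the handled type.
    public static bool Receives(string handledType, string publishedConcreteType) =>
        TopicFor(publishedConcreteType) == handledType;
}
```

In this model, Receives("Shipping.IOrderStatusChanged", "Shipping.OrderAccepted") is true, while Receives("Shipping.IOrderAccepted", "Shipping.OrderAccepted") is false: once the publisher redirects the event, a separate endpoint subscribing to IOrderAccepted by default never sees it.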

Full Multiplexing with filters

The next section with guidance on implementing a system like this is a bit further down. The section Multiplexed Derived Events repeats the previous parts, without providing new information, but Filtering within a Multiplexed Topic (https://docs.particular.net/transports/azure-service-bus/topology#subscription-rule-matching-advanced-multiplexing-strategies-filtering-within-a-multiplexed-topic) adds filtering.

Documentation says

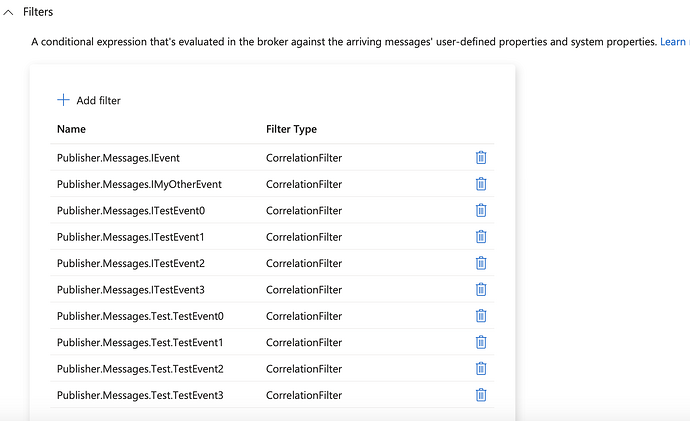

The guidance boils down to: publish every related message to a single topic, and filter based on endpoint’s needs to get the messages you want. Preferably using a CorrelationFilter for performance reasons.

My Interpretation

I’m not overly familiar with CorrelationFilters, but based on the MSDN docs (https://learn.microsoft.com/en-us/azure/service-bus-messaging/topic-filters#correlation-filters) it seems to me that these matching rules only perform full string equality checks on properties. We can’t search through the NServiceBus.EnclosedMessageTypes property without using a SqlFilter. We could do that, but then we’re back to the old Topology.
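The difference between the two filter kinds can be sketched in plain C#. This is only a model of the matching semantics as I read them from the Microsoft docs, not Azure SDK code: FilterSemantics and its members are hypothetical names, and the header value is simplified (the real NServiceBus.EnclosedMessageTypes header carries more detail per entry, and its exact format is an assumption here).

```csharp
using System;

public static class FilterSemantics
{
    // Illustrative header value: a ';'-separated list of the message's types.
    // Assumption: simplified; the real header has more detail per entry.
    public const string EnclosedMessageTypes =
        "Shipping.OrderAccepted;Shipping.IOrderAccepted;Shipping.IOrderStatusChanged";

    // Correlation-style match: the whole property value must equal the expected value.
    public static bool CorrelationMatch(string propertyValue, string expected) =>
        string.Equals(propertyValue, expected, StringComparison.Ordinal);

    // SQL-style match, modelling a rule such as:
    //   [NServiceBus.EnclosedMessageTypes] LIKE '%Shipping.IOrderStatusChanged%'
    public static bool SqlLikeMatch(string propertyValue, string expected) =>
        propertyValue.Contains(expected, StringComparison.Ordinal);
}
```

With the sample header, CorrelationMatch(EnclosedMessageTypes, "Shipping.IOrderStatusChanged") is false because the property holds the whole list, whereas SqlLikeMatch with the same arguments is true. An equality-only filter would need the interface name to be the entire value of some property.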

We might be able to come up with an alternative: creating a separate property for each enclosed message type and creating CorrelationFilters on those. Either way, this is something that a consumer of NServiceBus would have to figure out and implement themselves, both setting these properties on outgoing messages and, more importantly, provisioning the required filters in Azure.
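A minimal sketch of the first half of that idea follows: deriving one property per type a message can be handled as. Everything here is hypothetical, including the TypeMarkerProperties name and the "MessageType." prefix, and the Shipping types are stand-ins without the IEvent marker so the block needs no package reference. Attaching these values to outgoing messages and provisioning matching rules in Azure are not shown, and the sketch assumes the values would end up as application properties on the native message.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

namespace Shipping
{
    // Stand-ins for the post's types; IEvent is omitted to avoid a package reference.
    public interface IOrderAccepted { }
    public interface IOrderStatusChanged { }
    public class OrderAccepted : IOrderAccepted, IOrderStatusChanged { }
}

public static class TypeMarkerProperties
{
    // Hypothetical property-name prefix; not an NServiceBus or Azure convention.
    const string Prefix = "MessageType.";

    // One property per type the message can be handled as:
    // the concrete class, its base classes (except object), and its interfaces.
    public static IReadOnlyDictionary<string, string> For(Type messageType)
    {
        var classChain = new List<Type>();
        for (var t = messageType; t != null && t != typeof(object); t = t.BaseType)
            classChain.Add(t);

        return classChain
            .Concat(messageType.GetInterfaces())
            .Where(t => t.FullName != null)
            .Distinct()
            .ToDictionary(t => Prefix + t.FullName, _ => "true");
    }
}
```

For typeof(Shipping.OrderAccepted) this yields three entries, one of which is "MessageType.Shipping.IOrderStatusChanged" with the value "true". A subscriber could then match that single property by equality, which is the kind of check a correlation filter performs.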

Installers

There’s also an issue with installer support. Before, we could rely on installers to provision the entire required topology without our intervention. We run the installers in a separate, more highly privileged step in our deploy pipeline. If we were to have to manually provision certain aspects of the topology, this would introduce additional complexity, maintenance and room for error.

The Azure Service Bus transport configuration also does not allow us to extend the topology installation to provision these filters programmatically.

I have to acknowledge that there is a currently open improvement issue on GitHub (prompted by an earlier question of mine on StackOverflow) that proposes adding installer support for correlation filters.

So, what’s the question?

The documentation suggests that these features (interface based inheritance and routing based on inheritance) are supported by NServiceBus, but it seems like the new Azure Service Bus transport topology only supports this by downgrading the experience (requiring manual configuration and infrastructure changes outside installer support) without any meaningful gain (implementing a working solution results in more or less the same topology as the old single topic topology).

We are interested to know if Particular recognises the same gap we observe between documented features and their actual support via the Azure Service Bus Transport, and if so - what are the plans to bridge this gap?

Here are some solutions we can identify, in order of descending preference:

1. Instead of phasing out the “bundle” topology - support both topologies long-term and let consumers choose their desired topology.

Inheritance is supported out of the box in the “bundle” topology. No additional configuration or infrastructure is needed. Our understanding of the Microsoft-documented limits leads us to believe that an organisation would need an excessively high number of Service Bus entities and/or volume of traffic to hit them. Our own organisation is very unlikely to come anywhere near any of the reported limits in the foreseeable future.

We have yet to encounter any noticeable performance or latency issues associated with the SQL subscription rules. Granted, our organisation does not yet have a massive number of subscriptions or filters. Even so, since messaging systems are geared more towards eventual consistency than towards speed of execution, we doubt that any but the most complex systems would see unacceptable performance or latency as a result.

We find it may be highly desirable for many organisations of varying sizes, ours included, to keep this option available long-term.

2. Introduce an additional / alternative topic-per-event topology which provides the inheritance feature out of the box.

As we understand from the docs, the topology change was introduced aiming to satisfy additional requirements / provide additional benefits over the “bundle” topology: (A) Reduce filtering overhead, boosting performance and scalability. (B) Mitigate the risk of hitting Azure Service Bus limits. (C) Reduce failure domain size in case of hitting a topic size quota. As a result, the current topology was introduced, which consists of a single topic per the most concrete event type. However, this is not the only topology that can satisfy the above requirements. Trying to solve the puzzle of satisfying said requirements while “natively” supporting inheritance, we have experimented with a few alternative topologies, some of which were concluded to be quite adequate. If Particular is interested, we are more than willing to share our ideas.

If a suitable topology candidate is found, Particular may wish to consider here as well whether to provide the new topology as an evolution of the current one (aiming to replace it in a future version), or as a configurable option allowing organisations to favour either native inheritance support or topology simplicity.

3. Enhance extensibility points, and let consumers implement their own topology.

Currently, it’s not possible to extend the TopicTopology class due to internal abstract methods. While a lot of topology setup could be performed by creating a Feature modifying the topology configuration, installer support is limited because the transport keeps its ServiceBusAdministrationClient internal.

By opening up the topology types for additional extensibility, Particular could let consumers implement their own home-brew solutions to such problems.

While this solution could possibly work (and may be a nice improvement regardless), we naturally consider this the least desirable solution. While it allows organisations to add inheritance support, it does not provide it out of the box, and would indicate that Particular is willing to drop native support for this feature for the Azure Service Bus transport going forward. We can only hope that Particular would be willing to invest the effort to re-incorporate support for this feature into this prominent transport. We very much agree with the benefits of resilience, extensibility and maintainability as documented in dynamic dispatch and polymorphic routing, and all in all we find these features quite a pillar differentiating NServiceBus from competing offerings.

4. Another solution we haven’t considered?
