I’m running NSB 8 in an Azure Functions v4 host (.NET 6 isolated) using Azure Service Bus, with the latest NuGet versions of the NSB packages.

I have 3 scheduled functions that run every 15 minutes to send a couple of messages.

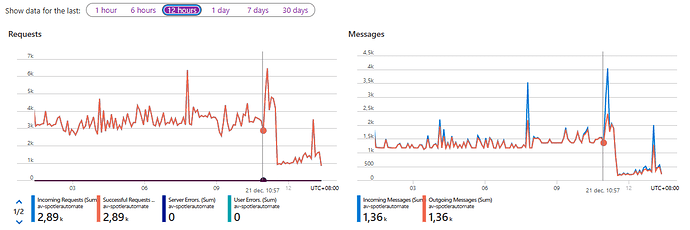

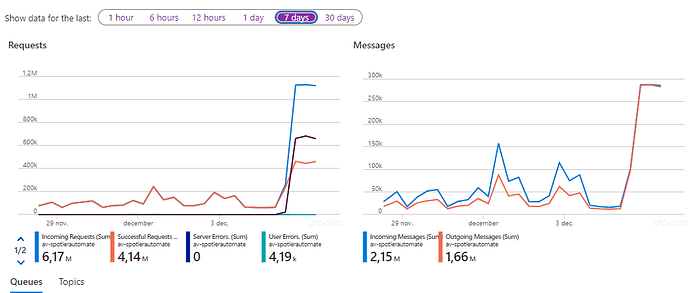

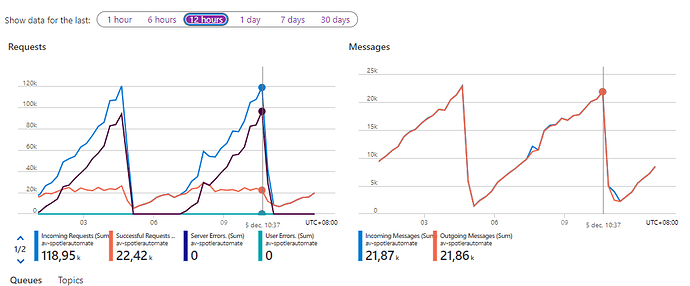

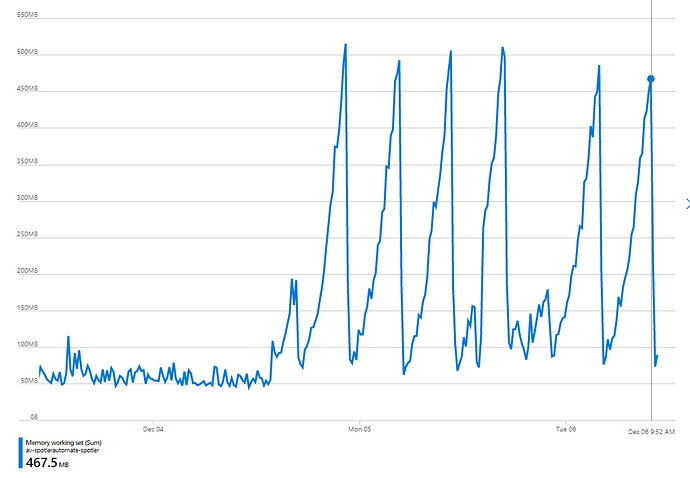

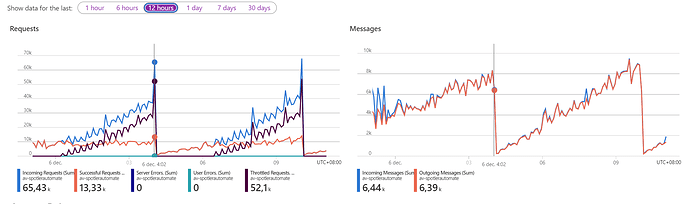

After upgrading to NSB 8 on Saturday at 1 PM local time, I’m experiencing strange behavior. The incoming + outgoing count, which is normally below 1k-2k, now grows to 44k within 6 hours; the host then crashes with the error below and restarts.

Failed to receive a message on pump 'Processor-Main-AV.Spotler-5f94b9c4-64d8-4854-8ba7-5448d81a5210' listening on 'AV.Spotler' connected to 'av-spotlerautomate.servicebus.windows.net' due to 'Receive'. Exception: Azure.Messaging.ServiceBus.ServiceBusException: Cannot allocate more handles. The maximum number of handles is 255. (QuotaExceeded). For troubleshooting information, see https://aka.ms/azsdk/net/servicebus/exceptions/troubleshoot.

at Azure.Messaging.ServiceBus.Amqp.AmqpConnectionScope.CreateReceivingLinkAsync(String entityPath, String identifier, AmqpConnection connection, Uri endpoint, TimeSpan timeout, UInt32 prefetchCount, ServiceBusReceiveMode receiveMode, String sessionId, Boolean isSessionReceiver, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.Amqp.AmqpConnectionScope.OpenReceiverLinkAsync(String identifier, String entityPath, TimeSpan timeout, UInt32 prefetchCount, ServiceBusReceiveMode receiveMode, String sessionId, Boolean isSessionReceiver, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver.OpenReceiverLinkAsync(TimeSpan timeout, UInt32 prefetchCount, ServiceBusReceiveMode receiveMode, String identifier, CancellationToken cancellationToken)

at Microsoft.Azure.Amqp.FaultTolerantAmqpObject`1.OnCreateAsync(TimeSpan timeout, CancellationToken cancellationToken)

at Microsoft.Azure.Amqp.Singleton`1.GetOrCreateAsync(TimeSpan timeout, CancellationToken cancellationToken)

at Microsoft.Azure.Amqp.Singleton`1.GetOrCreateAsync(TimeSpan timeout, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver.ReceiveMessagesAsyncInternal(Int32 maxMessages, Nullable`1 maxWaitTime, TimeSpan timeout, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver.ReceiveMessagesAsyncInternal(Int32 maxMessages, Nullable`1 maxWaitTime, TimeSpan timeout, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver.<>c.<<ReceiveMessagesAsync>b__41_0>d.MoveNext()

--- End of stack trace from previous location ---

at Azure.Messaging.ServiceBus.ServiceBusRetryPolicy.RunOperation[T1,TResult](Func`4 operation, T1 t1, TransportConnectionScope scope, CancellationToken cancellationToken, Boolean logRetriesAsVerbose)

at Azure.Messaging.ServiceBus.ServiceBusRetryPolicy.RunOperation[T1,TResult](Func`4 operation, T1 t1, TransportConnectionScope scope, CancellationToken cancellationToken, Boolean logRetriesAsVerbose)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver.ReceiveMessagesAsync(Int32 maxMessages, Nullable`1 maxWaitTime, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.ServiceBusReceiver.ReceiveMessagesAsync(Int32 maxMessages, Nullable`1 maxWaitTime, Boolean isProcessor, CancellationToken cancellationToken)

at Azure.Messaging.ServiceBus.ReceiverManager.ReceiveAndProcessMessagesAsync(CancellationToken cancellationToken)

AzureFunctions_FunctionName CheckForUpdatedContactsFunction

Azure.Messaging.ServiceBus.ServiceBusException:

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver+<RenewMessageLockInternalAsync>d__56.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver+<>c+<<RenewMessageLockAsync>b__55_0>d.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.ServiceBusRetryPolicy+<RunOperation>d__21`2.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.ServiceBusRetryPolicy+<RunOperation>d__21`2.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.Amqp.AmqpReceiver+<RenewMessageLockAsync>d__55.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.ServiceBusReceiver+<RenewMessageLockAsync>d__62.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.ServiceBusReceiver+<RenewMessageLockAsync>d__61.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification (System.Private.CoreLib, Version=6.0.0.0, Culture=neutral, PublicKeyToken=7cec85d7bea7798e)

at Azure.Messaging.ServiceBus.ReceiverManager+<RenewMessageLockAsync>d__18.MoveNext (Azure.Messaging.ServiceBus, Version=7.8.0.0, Culture=neutral, PublicKeyToken=92742159e12e44c8)

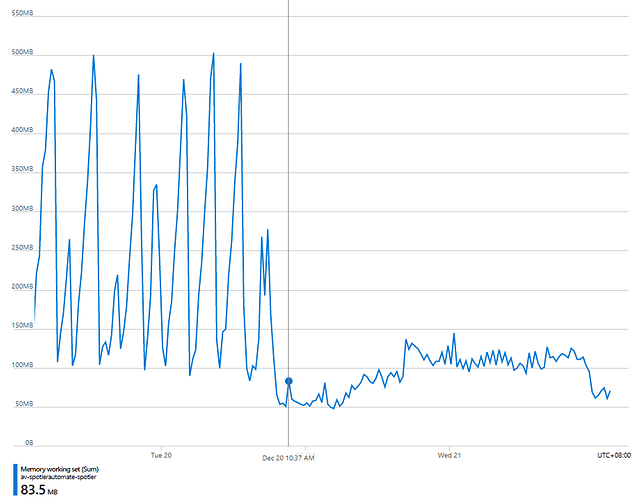

This is the 7-day view of requests and messages; you can see them going up at the end, after I upgraded to NSB 8.

Here is the detail from the last 12 hours, where it is building up and then fails with that exception.

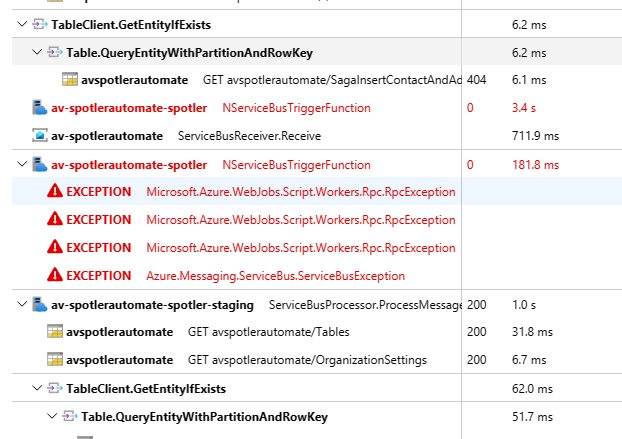

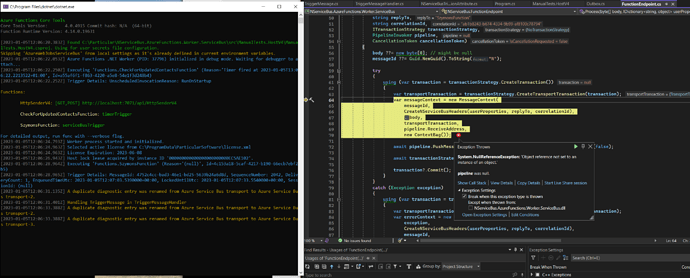

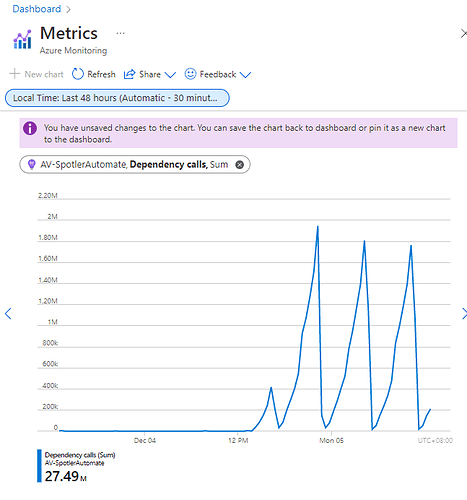

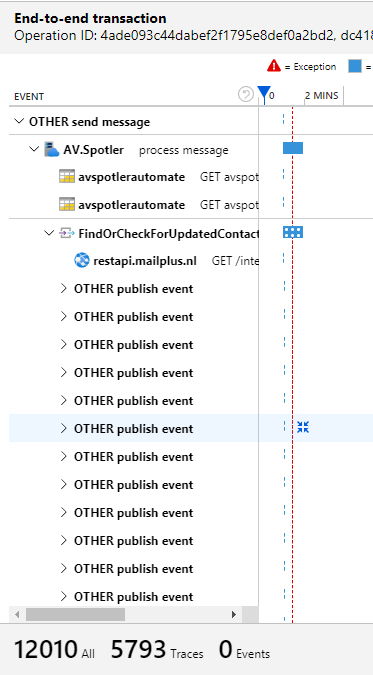

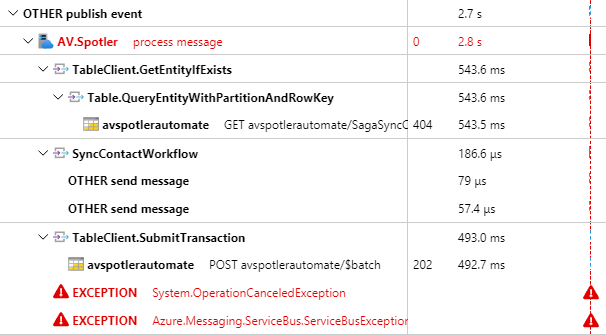

The number of dependencies seems to be building up. I am also using the new OpenTelemetry in NSB 8.

I’m still looking into the scheduled functions to see what could cause this, but they haven’t been changed for months.

Any idea or direction on what could be the cause of this?
